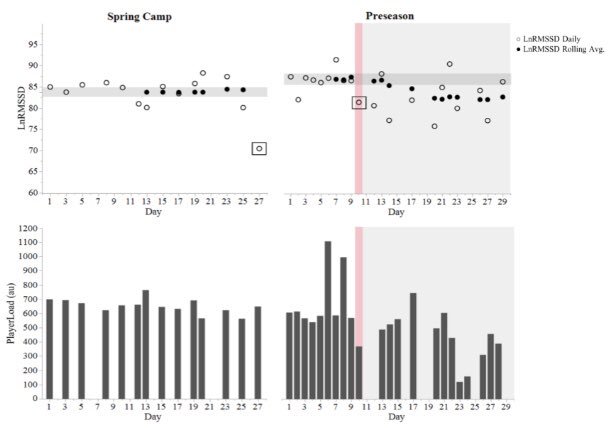

As part of my PhD work at Alabama, we tracked HRV in football players from day 1 of preseason training through to the national championship. A practical summary of some key findings follows the full-text link below.

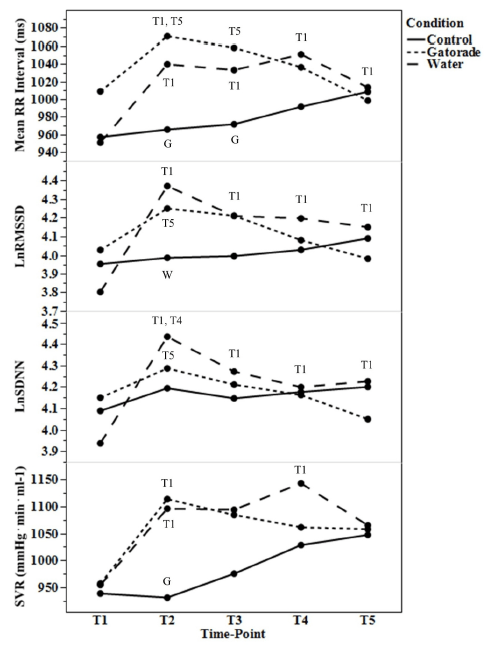

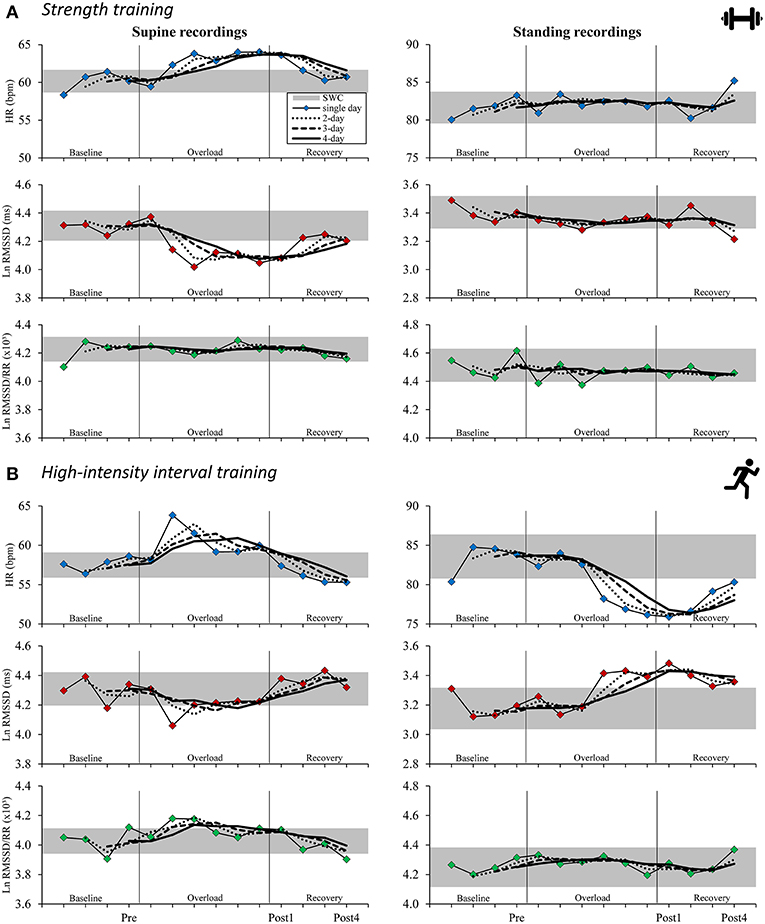

Fluctuations in HRV are expected throughout a season. However, chronically suppressed values are cause for concern. Sustained parasympathetic hypoactivity is associated with various pathological conditions and is a hallmark of stress and impaired recovery in athletes.

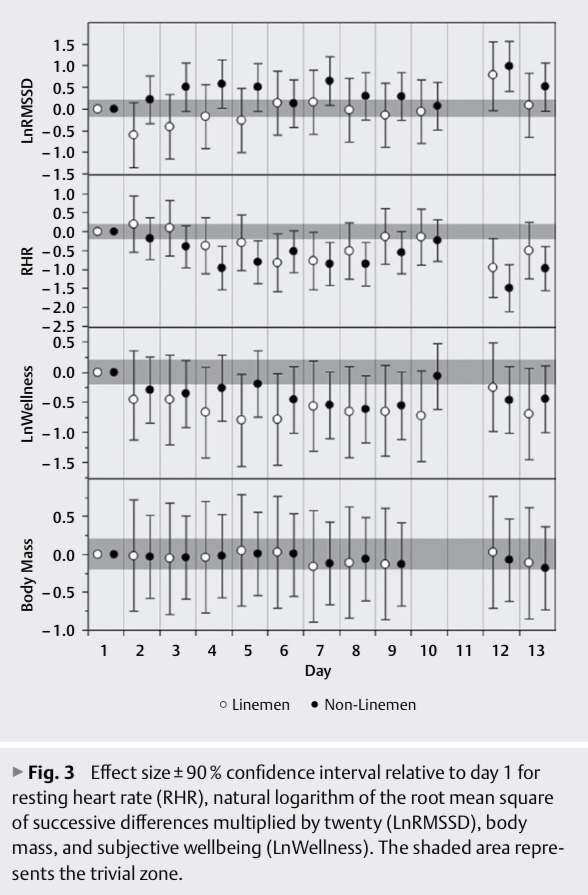

We learned from spring camp that day-to-day HRV recovery was delayed in linemen vs. the smaller and more aerobically fit skill players. Thus, we anticipated that linemen would be more susceptible to attenuated HRV throughout the season.

HRV started to decline by week 6 of the competitive period for linemen. A couple of notable events occurred here: 1) the first of 5 consecutive SEC match-ups vs. Top 25 nationally ranked opponents and 2) the week of mid-term exams for many players.

Although significant group-level reductions for linemen weren’t observed until later, key players showed descending HRV by mid-season, in the absence of changes in PlayerLoad. Suppressed HRV preceded illness and injury in 2 starters. Temporary rest restored HRV.

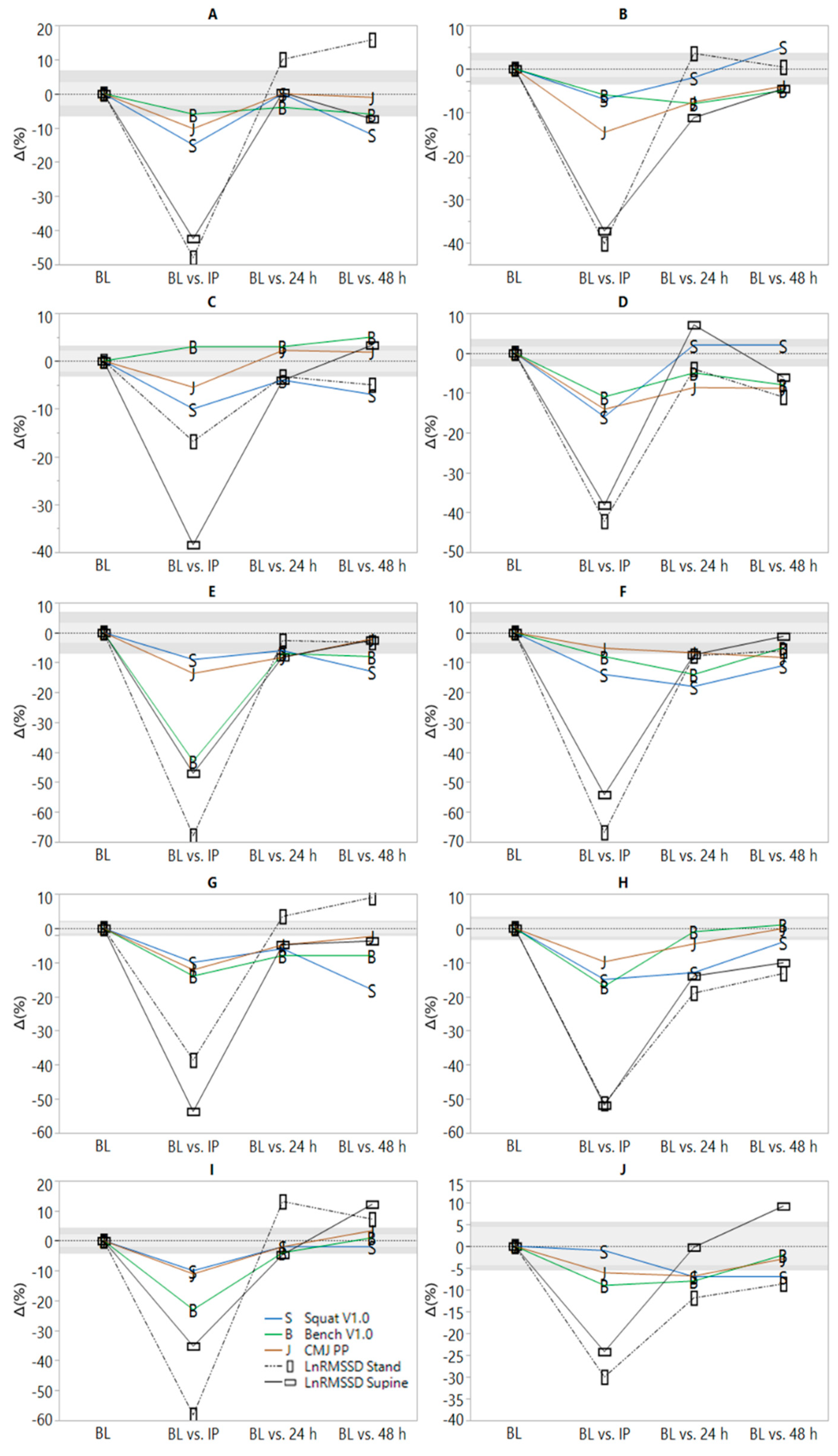

Group-level reductions occurred during an intensive camp-style preparation period for the college football playoffs following the SEC championship. Most players took a hit to their HRV, but linemen were hit the hardest. Note the magnitudes of the effect sizes in the table below.
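For readers unfamiliar with effect sizes, here is a minimal sketch of one common choice, Cohen's d with a pooled standard deviation. This is an assumption for illustration: it is not necessarily the statistic reported in the paper, and the HRV values (in log-transformed RMSSD units) are made up.

```python
from math import sqrt
from statistics import mean, stdev


def cohens_d(baseline, later):
    """Standardized mean difference (later minus baseline) using a pooled SD."""
    n1, n2 = len(baseline), len(later)
    pooled_var = (
        (n1 - 1) * stdev(baseline) ** 2 + (n2 - 1) * stdev(later) ** 2
    ) / (n1 + n2 - 2)
    return (mean(later) - mean(baseline)) / sqrt(pooled_var)


# Hypothetical values for illustration only, not data from the study.
preseason = [4.4, 4.6, 4.5, 4.7, 4.3]
playoff_prep = [4.0, 4.1, 3.9, 4.2, 4.0]

d = cohens_d(preseason, playoff_prep)
print(f"Cohen's d = {d:.2f}")  # negative d means HRV fell relative to baseline
```

A larger absolute d means a bigger shift relative to normal variation, which is why the linemen's values stand out.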

HRV remained suppressed for linemen through prep weeks for the national semi-final and the national championship. Smaller, non-significant decrements were observed for skill players. In addition to accumulating physical stress, psycho-emotional factors (pre-competitive anxiety, pressure to perform, media attention, etc.) likely contributed.

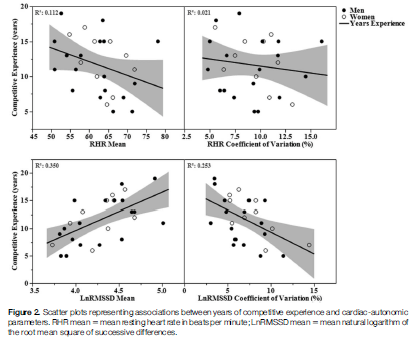

Although we emphasize the toll of a season on linemen, some skill players also showed suppressed values. The table below shows the rate of change in HRV for all players. 25% of skill players and 63% of linemen showed significantly descending HRV patterns throughout the season.
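One simple way to express a rate of change for an individual player is the slope of a straight line fitted to weekly HRV values. The sketch below assumes ordinary least squares and uses made-up numbers; the function name `hrv_trend` is hypothetical and the paper's exact model may differ.

```python
from math import sqrt


def hrv_trend(weeks, values):
    """Least-squares slope of HRV on week, plus the slope's t statistic."""
    n = len(weeks)
    mean_x = sum(weeks) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in weeks)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(weeks, values))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # Residual sum of squares around the fitted line.
    sse = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(weeks, values))
    std_err = sqrt(sse / (n - 2) / sxx)
    # A perfect fit has zero standard error, so t is undefined there.
    t_stat = slope / std_err if std_err > 0 else float("nan")
    return slope, t_stat


# Hypothetical weekly HRV values for one player, for illustration only.
weeks = [1, 2, 3, 4, 5]
hrv = [4.5, 4.4, 4.4, 4.2, 4.1]

slope, t_stat = hrv_trend(weeks, hrv)
print(f"slope = {slope:.3f} per week, t = {t_stat:.2f}")
```

A negative slope indicates a descending pattern. Comparing the t statistic against a t distribution with n - 2 degrees of freedom gives a p-value; `scipy.stats.linregress` is one option that reports it directly.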

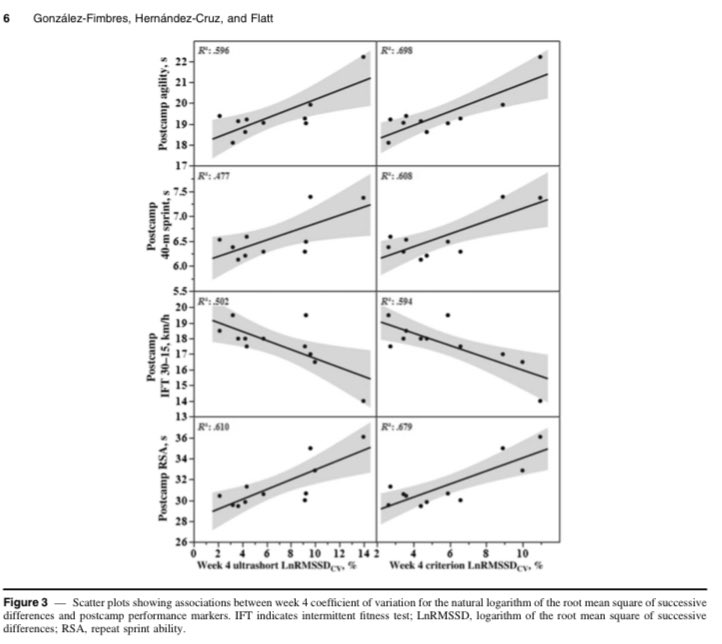

Linemen experience hypertension, arterial stiffening, and pathologic LV hypertrophy following 1 or more seasons. These maladaptations are possibly preceded by ANS imbalance. We hypothesize that larger players showing the worst HRV profiles suffer the greatest decrement in cardiovascular health markers.

If so, intervening when a decreasing HRV pattern is observed may not only be relevant to performance (limiting fatigue, injury, and infection risk) but may also help mitigate the cardiovascular toll of playing football at such a high level. We are seeking funding to explore this in the future.

The findings highlight potential deficiencies in, or greater demands on, the coping capacity of linemen vs. smaller players. Factors hypothesized to contribute to more prevalent ANS imbalance in linemen and potential implications for health and performance are summarized below.

Linemen need careful attention and monitoring. We need strategies to prevent ANS imbalance from occurring (load management, aerobic capacity, treatment of health conditions like sleep apnea, etc.), and we need restorative methods to implement if it occurs.

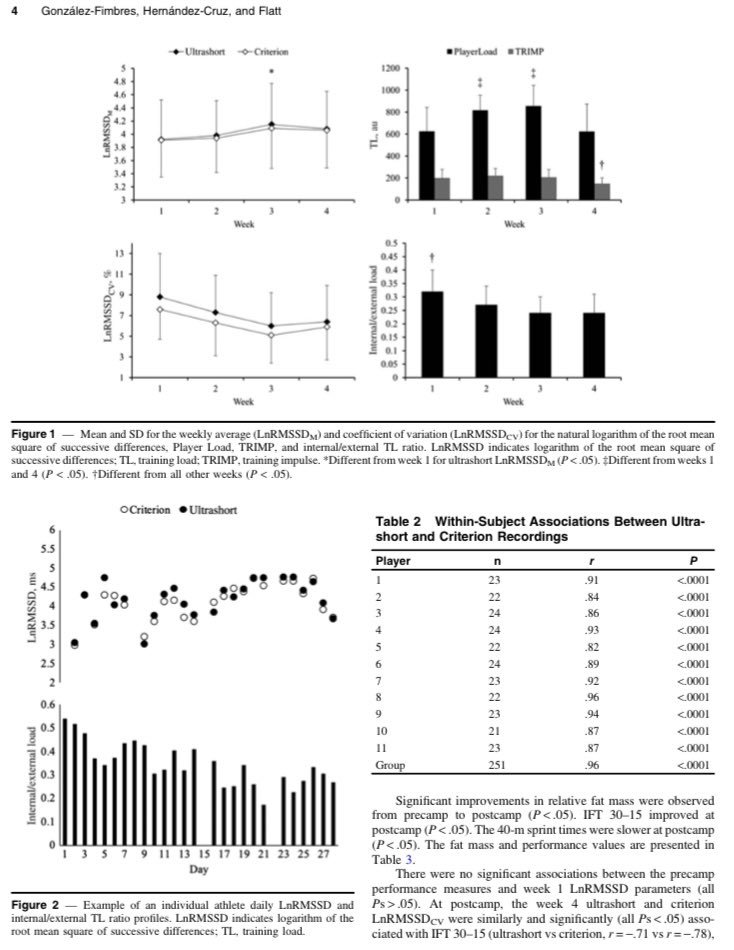

Tracking HRV with a mobile app was inexpensive and easy. The time demand on players was ~3 min/week, recorded while they waited to get taped. Though sub-optimal relative to post-waking measures, this approach enabled timely detection of descending patterns, which may be useful for guiding interventions relevant to player health and wellbeing.
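As a rough illustration of what flagging a descending pattern could look like in practice, the sketch below marks a player when the most recent weekly values all sit below a baseline threshold. The threshold (baseline mean minus 0.5 baseline SD), the two-week window, and the function name `flag_suppressed` are assumptions for illustration, not the criteria used in the study.

```python
from statistics import mean, stdev


def flag_suppressed(baseline, recent, sd_factor=0.5, min_weeks=2):
    """Return True if the last `min_weeks` values all fall below the threshold."""
    threshold = mean(baseline) - sd_factor * stdev(baseline)
    tail = recent[-min_weeks:]
    # Require a full window so a single low reading does not raise a flag.
    return len(tail) == min_weeks and all(v < threshold for v in tail)


# Hypothetical values for illustration only.
preseason_baseline = [4.5, 4.6, 4.4, 4.5]
in_season = [4.5, 4.1, 4.0]

if flag_suppressed(preseason_baseline, in_season):
    print("Descending HRV pattern: refer to performance and medical staff")
```

Requiring consecutive low weeks trades speed of detection for fewer false alarms; staff would tune both parameters to their own data.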

Though a better understanding of the health and performance ramifications of suppressed HRV in football players is needed, a descending pattern may serve as an easily identifiable red flag requiring attention from performance and medical staff.